TECHNICAL SEO // DATA SCIENCE

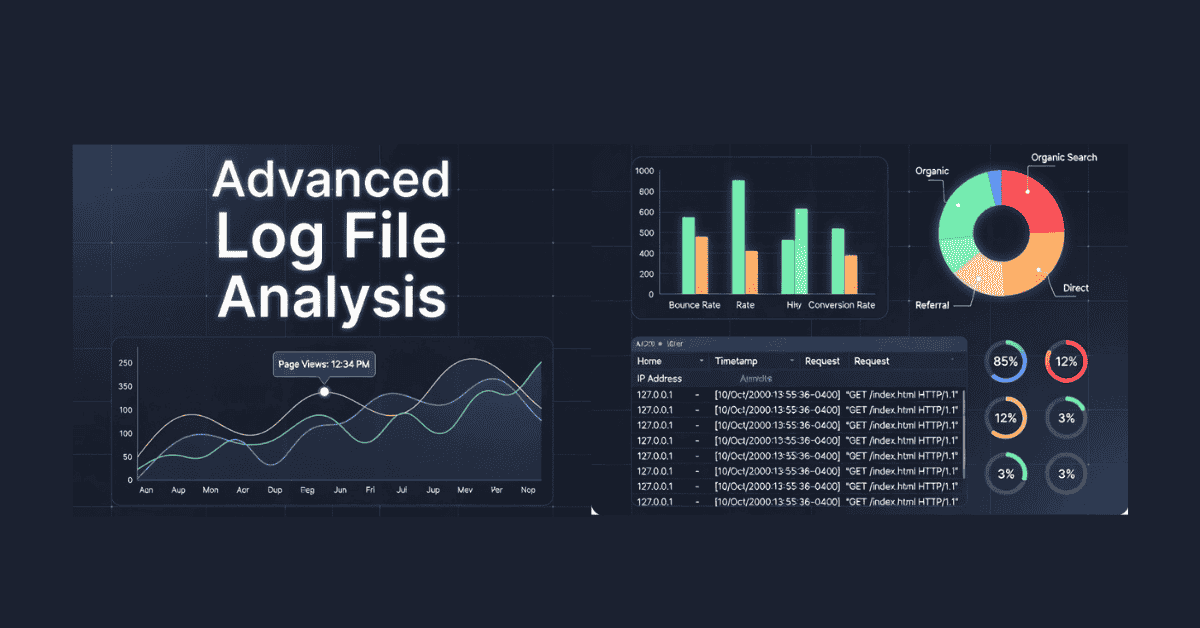

Advanced Log File Analysis for SEO

Unlocking Hidden Insights in 2026

In the high-stakes game of SEO, where algorithms evolve faster than ever, advanced log file analysis has emerged as a secret weapon for savvy marketers and site owners. If you're still relying solely on Google Search Console or basic crawlers, you're missing out on the raw, unfiltered truth about how search engines interact with your website. Log files—those behind-the-scenes records of every server request—reveal crawler behavior, indexing quirks, and performance bottlenecks that can make or break your rankings. As we hit 2026, with AI-driven search and stricter crawl efficiency standards, mastering this technique isn't just technical SEO; it's essential for optimizing crawl budgets, spotting issues before they tank your visibility, and staying ahead of the competition.

This guide will walk you through what advanced log file analysis entails, why it's more critical now than ever, and how to implement it effectively. We'll cover tools, key metrics, and pro strategies to turn data into actionable wins. Whether you're managing a massive e-commerce site or a content-heavy blog, these insights will help you fine-tune your SEO strategy for better results. Let's dig in and demystify the logs.

What Is Log File Analysis in SEO?

At its core, log file analysis involves examining your web server's access logs to track how bots like Googlebot, Bingbot, or even AI crawlers visit and interact with your pages. These files log details such as IP addresses, timestamps, user agents, requested URLs, HTTP status codes, and response sizes—essentially a play-by-play of every hit on your site.
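To make those fields concrete, here is a minimal sketch that parses one access-log line in Python. It assumes your server writes the common Apache/Nginx "combined" format; the `COMBINED_LOG` pattern, the `parse_line` helper, and the sample line are illustrative, so adjust them to your own log format.

```python
import re
from datetime import datetime

# Regex for the "combined" log format (an assumption; match it to your server config).
COMBINED_LOG = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) (?P<protocol>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if the line does not match."""
    match = COMBINED_LOG.match(line)
    if match is None:
        return None
    hit = match.groupdict()
    hit["status"] = int(hit["status"])
    # A "-" size means no response body was sent.
    hit["size"] = 0 if hit["size"] == "-" else int(hit["size"])
    hit["time"] = datetime.strptime(hit["time"], "%d/%b/%Y:%H:%M:%S %z")
    return hit

# Made-up sample line for illustration.
sample = ('66.249.66.1 - - [10/Jan/2026:06:25:14 +0000] '
          '"GET /products/widget?color=blue HTTP/1.1" 200 5123 "-" '
          '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"')
hit = parse_line(sample)
print(hit["ip"], hit["url"], hit["status"], hit["size"])
# 66.249.66.1 /products/widget?color=blue 200 5123
```

Lines that don't fit the pattern (malformed requests, a different log format) come back as `None`, so you can count and inspect them rather than silently dropping them.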

Unlike tools that simulate crawls, logs provide first-party data on real bot activity. For SEO, this means uncovering issues like crawl traps (endless URL loops that waste resources), orphan pages (URLs bots still request even though no internal links point to them), broken links, redirect chains, and slow-loading sections. It's particularly valuable for large sites where crawl budget (roughly, the number of URLs a bot can and wants to crawl in a given period) is limited, helping ensure high-value pages get prioritized.

Basic analysis might spot 404 errors or frequent crawls, but advanced takes it further: correlating data with rankings, analytics, and user behavior to inform broader strategies.

Why Log File Analysis Matters More Than Ever in 2026

Search engines have gotten smarter, and so have their expectations. In 2026, crawl inefficiency costs you even when nothing is technically broken: budget wasted on low-value pages can mean slower indexing for important content. With AI-driven crawling on the rise, logs help you understand how these bots actually behave, spotting patterns like reduced crawls after updates or a preference for certain page types.

The rise of AI content and dynamic sites means more complexity: logs reveal whether bots are requesting the JavaScript and API resources needed to render your pages, or getting stuck in endless paginated or parameterized URLs. Plus, in an era of E-E-A-T and helpful content expectations, efficient crawling helps your best content get discovered and refreshed promptly. For e-commerce or news sites, it's a goldmine for geographic insights, such as which regions bot requests originate from.

Ignoring logs? You're flying blind—competitors using them can fix issues proactively, boosting visibility while you react to drops in traffic.

Key Metrics and Insights to Extract from Log Files

Advanced analysis goes beyond surface-level scans. Here's what to focus on:

- Crawl Frequency and Budget Allocation: See how often bots visit key pages. High frequency on non-essential URLs (like old redirects) wastes budget; steer bots toward priority pages with internal links, XML sitemaps, and robots.txt rules.

- Status Codes and Errors: Track 200s (success), 404s (not found), 301/302 redirects, and 5xx server errors. Chains of redirects or frequent 429s (rate limiting) signal problems.

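- Bot Identification and Behavior: User-agent strings are easy to spoof, so confirm real bots with a reverse DNS lookup (plus forward confirmation) or the IP ranges the search engine publishes. Then analyze paths: are bots requesting URLs disallowed in robots.txt or over-crawling low-value sections?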

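- Response Times and Sizes: Consistently slow responses (over 500ms is a common rule of thumb) can lead bots to crawl less, and large files eat bandwidth. Response time only appears in logs if your log format is configured to record it. Optimize for faster crawls.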

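- Crawled vs. Indexed Pages: Logs show what was crawled, not what was indexed, so compare crawled URLs against GSC's page indexing data to identify pages hit but not indexed, often due to thin content or duplicates.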
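Since a user-agent string alone proves nothing, bot verification usually comes down to DNS. Here's a minimal sketch of the reverse-then-forward lookup check using Python's standard `socket` module. The `is_verified_bot` helper and its injectable lookup parameters are hypothetical names for illustration; the suffix list covers Google's main crawler hostnames only, and other engines publish their own.

```python
import socket

# Hostname suffixes used by Google's main crawlers (extend for other engines).
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")

def is_verified_bot(ip, suffixes=GOOGLE_SUFFIXES,
                    reverse_lookup=socket.gethostbyaddr,
                    forward_lookup=socket.gethostbyname_ex):
    """Reverse-resolve the IP, check the hostname suffix, then forward-confirm it."""
    try:
        hostname = reverse_lookup(ip)[0]
        if not hostname.endswith(suffixes):
            return False
        # The hostname must resolve back to the same IP (IPv4 only in this sketch).
        return ip in forward_lookup(hostname)[2]
    except OSError:
        # Covers failed lookups (socket.herror and socket.gaierror are OSError subclasses).
        return False

# Offline demo with stub resolvers so the example runs without network access.
def stub_reverse(ip):
    return ("crawl-66-249-66-1.googlebot.com", [], [ip])

def stub_forward(hostname):
    return (hostname, [], ["66.249.66.1"])

genuine = is_verified_bot("66.249.66.1", reverse_lookup=stub_reverse, forward_lookup=stub_forward)
spoofed = is_verified_bot("203.0.113.5", reverse_lookup=stub_reverse, forward_lookup=stub_forward)
print(genuine, spoofed)  # True False
```

In real use you would call `is_verified_bot(ip)` with the default resolvers and cache results per IP, since DNS lookups are slow compared to parsing log lines.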

Use tables for quick comparisons:

| Metric | What It Reveals | Actionable Insight |
|---|---|---|
| Crawl Frequency | Bot interest in pages | Prioritize updates for high-crawl, valuable URLs |
| Error Rates | Technical health | Fix 4xx/5xx to prevent de-indexing |
| User Agent Patterns | Bot types and fakes | Block malicious bots via .htaccess |
| Geographic Hits | Regional SEO opportunities | Tailor content for high-traffic regions |
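As a rough illustration of the first two rows, this sketch tallies crawl frequency per URL and an overall error rate. It assumes each bot hit has already been parsed into a dict with `url` and `status` keys; those field names, the `summarize` helper, and the sample data are all hypothetical.

```python
from collections import Counter

def summarize(hits):
    """Count hits per URL and per status class, and compute the 4xx/5xx share."""
    per_url = Counter()
    per_status_class = Counter()
    for hit in hits:
        per_url[hit["url"]] += 1
        # 200 -> "2xx", 301 -> "3xx", 404 -> "4xx", and so on.
        per_status_class[f'{hit["status"] // 100}xx'] += 1
    total = sum(per_status_class.values())
    errors = per_status_class["4xx"] + per_status_class["5xx"]
    error_rate = errors / total if total else 0.0
    return per_url, per_status_class, error_rate

# Made-up sample hits for illustration.
hits = [
    {"url": "/", "status": 200},
    {"url": "/old-page", "status": 301},
    {"url": "/old-page", "status": 301},
    {"url": "/missing", "status": 404},
]
per_url, per_status_class, error_rate = summarize(hits)
print(per_url.most_common(1))  # [('/old-page', 2)]
print(error_rate)              # 0.25
```

Here the most-crawled URL is a redirect, which is exactly the kind of wasted budget worth investigating first.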

Tools for Advanced Log File Analysis

You don't need to sift through raw logs manually—tools make it efficient:

- Screaming Frog Log File Analyser: The free version is capped at a small number of log events; the paid licence processes millions of lines. Verifies bots, identifies crawled URLs, and exports reports.

- seoClarity Bot Clarity: Integrates log data with rankings and analytics for correlations. Spots errors pre-ranking impact.

- Other Options: OTTO SEO for automated insights, or custom scripts in Python for uber-advanced users. Access logs via hosting panels (e.g., cPanel), FTP, or APIs from providers like AWS.

For 2026, look for AI-enhanced tools that predict crawl patterns using machine learning.

Step-by-Step Guide to Performing Advanced Log File Analysis

- Access Your Logs: Download from your server (e.g., Apache's access.log). Aim for 30-90 days of data for trends.

- Filter and Clean Data: Use tools to isolate bot traffic (e.g., user agents containing "bot" as a first pass, then verify the IPs, since user agents can be faked). Remove human visits.

- Analyze Key Areas: Cross-reference with GSC for discrepancies. Use regex for pattern matching, like spotting parameter-heavy URLs wasting budget.

- Correlate with Other Data: Overlay logs with GA4 traffic or ranking tools to see if low crawls correlate with drops.

- Implement Fixes: Update robots.txt, fix errors, prune low-value pages, and monitor changes.

- Automate and Monitor: Set up scheduled pulls and alerts for anomalies.
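The filtering and pattern-matching steps above can be sketched in a few lines of Python. This example assumes hits are already parsed into dicts with `url` and `user_agent` keys; the `BOT_PATTERN` regex is a coarse first-pass filter, and the `MAX_PARAMS` threshold and `parameter_heavy_paths` helper are hypothetical choices you would tune for your own site.

```python
import re
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

# Coarse first-pass bot filter on the user agent (does not prove the bot is genuine).
BOT_PATTERN = re.compile(r"bot|crawl|spider", re.IGNORECASE)
MAX_PARAMS = 2  # hypothetical threshold for "parameter-heavy"

def parameter_heavy_paths(hits, max_params=MAX_PARAMS):
    """Count bot hits per path where the URL carries more than max_params query parameters."""
    wasted = Counter()
    for hit in hits:
        if not BOT_PATTERN.search(hit["user_agent"]):
            continue  # skip human visits
        parts = urlsplit(hit["url"])
        params = parse_qsl(parts.query, keep_blank_values=True)
        if len(params) > max_params:
            wasted[parts.path] += 1
    return wasted

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BINGBOT = "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

# Made-up sample hits for illustration.
hits = [
    {"url": "/shoes?color=red&size=9&sort=price", "user_agent": GOOGLEBOT},
    {"url": "/shoes?color=blue&size=8&sort=name&page=2", "user_agent": BINGBOT},
    {"url": "/shoes?color=red", "user_agent": GOOGLEBOT},
    {"url": "/shoes?color=red&size=9&sort=price", "user_agent": BROWSER},
]
wasted = parameter_heavy_paths(hits)
print(wasted.most_common())  # [('/shoes', 2)]
```

Paths that rank high in this count are candidates for robots.txt rules, canonical tags, or cleaner internal linking.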

Advanced Techniques for Deeper Insights

Take it up a notch:

- User-Agent Dissection: Parse strings for bot versions or mobile vs. desktop crawls to tailor optimizations.

- AI Crawler Analysis: Track emerging AI bots (e.g., for generative search) and their unique behaviors.

- Predictive Modeling: Use logs to forecast crawl needs post-site changes, integrating with AI tools for simulations.

- GEO and Segmentation: Break down by IP for location-based insights, enhancing local SEO.
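For the user-agent and AI crawler techniques, a simple token lookup goes a long way. The sketch below labels a user-agent string by bot family and guesses mobile vs. desktop from the presence of "Mobile" in the string. The `BOT_TOKENS` list is illustrative and incomplete, the `classify` helper is a hypothetical name, and the sample strings are simplified, so check each vendor's published user agents before relying on it.

```python
# (token, label) pairs checked in order; an illustrative list that needs upkeep.
BOT_TOKENS = [
    ("GPTBot", "OpenAI GPTBot"),
    ("ClaudeBot", "Anthropic ClaudeBot"),
    ("PerplexityBot", "PerplexityBot"),
    ("bingbot", "Bingbot"),
    ("Googlebot", "Googlebot"),
]

def classify(user_agent):
    """Return (bot_label, device) for known bot tokens, or None for everything else."""
    ua = user_agent.lower()
    for token, label in BOT_TOKENS:
        if token.lower() in ua:
            # Mobile crawler user agents typically include a "Mobile" token.
            device = "smartphone" if "mobile" in ua else "desktop"
            return label, device
    return None

# Simplified sample user agents for illustration.
smartphone_ua = ("Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
                 "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Mobile Safari/537.36 "
                 "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)")
ai_ua = "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"

print(classify(smartphone_ua))  # ('Googlebot', 'smartphone')
print(classify(ai_ua))          # ('OpenAI GPTBot', 'desktop')
```

Because this only reads the user-agent string, treat the labels as claims to verify (for example by DNS or published IP ranges) before acting on them.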

Common Mistakes to Avoid

- Overlooking non-Google bots—Bing and others matter too.

- Underestimating data volume: start with a small sample (a single day or one site section) before processing months of logs.

- Not acting on insights—analysis without fixes is pointless.

- Skipping correlations—logs alone miss the big picture.

Conclusion: Elevate Your SEO with Log File Mastery

Advanced log file analysis is the unsung hero of technical SEO in 2026, offering a view of real crawler behavior that sampled, aggregated tools like GSC can't match. By spotting inefficiencies, optimizing crawl budget, and keeping pace with AI crawlers, you'll improve indexability and give your rankings and user experience a better foundation. Start by grabbing your logs today, pick a tool, and dive in: the data waiting there could transform your strategy. In a world of algorithmic shifts, those who decode the logs stay on top. Ready to uncover your site's hidden story?